Formalizing Rubin's theorem

This last semester, I followed Pr. Laurent Bartholdi’s course on computer-assisted proofs in Lean, both because I wanted to get back into the field of computer proofs, and because I was excited at the idea of contributing to Mathlib, which is an impressively large collection of theorems and mathematical tools, all proven in Lean.

About a month into the lecture, Laurent handed me his work-in-progress formalization of Rubin’s theorem, which was still written for Lean 3, with the task to port it to Lean 4 and to make some progress with this formalization. Since then, I have successfully finished this formalization, and I am currently in the process of cleaning it up and slowly getting it merged into Mathlib!

In this blog entry, I will try my best to introduce the necessary knowledge required to understand what Rubin’s theorem is about, and then go into the interesting moments of the process of formalizing this theorem in Lean.

Groups, topologies and group actions

This is a crash course on the necessary concepts of groups, topologies and group actions to be able to formulate Rubin’s theorem, and hopefully develop an intuition for what it means.

These are structures that you most likely have already encountered somewhere, and they have a lot of interesting and useful properties besides the elementary ones listed here.

If you are already familiar with these concepts, then you can safely jump to the last section of this chapter.

Groups

Groups are a common structure that arises when studying objects like addition, multiplication, matrices or sequences of moves on a Rubik’s cubes. All four of these examples have a binary operation that can be cancelled out or “undone”, yielding a “do nothing” element (respectively , , the identity matrix and the empty sequence).

A group mirrors these properties in a more abstract and generic way, allowing us to forget about the specifics of how each of the examples above work. As such, every group is made up of four components, each mirroring one of the interesting aspects of these structures:

- The carrier set,

which we will refer to as . It contains all of the elements of the structure we wish to study.

- An identity element,

here written (or ). It represents the “do nothing” element.

- A binary operation,

here represented using the multiplication symbol . It represents the act of combining or chaining together two elements and to get a compound element , that does what does followed by what does.

Note: the left-to-right interpretation is also possible, but I will use the right-to-left interpretation, since this is how matrices naturally multiply together.

- The inverse operation,

which is often written . It represents the act of “undoing” an element. For instance, the Rubik’s cube move (also written ) undoes the move .

Then, these four components follow a couple of rules:

- Identity:

Multiplying by must do nothing, so for any , we have and .

- Associativity:

For any , we have . This essentially lets us forget about parentheses.

- Inverse:

For each element , multiplying it by cancels it out: and .

- Closure:

If and are in , then and are also in

There are many examples of groups in the wild, some of which you might already be familiar with. For instance:

- Integers, armed with addition: , , and

- Non-zero rational numbers, armed with multiplication: , , and

- Invertible, square matrices

- Sequences of moves on a Rubik’s cube, with representing the chaining of two sequences of moves, and undoing all of the moves in

I highly recommend watching 3Blue1Brown’s video on group theory for a more visual introduction to group theory, and Lingua Mathematica’s video on the associativity rule for a more intuitive interpretation of the group axioms.

Note that multiplication doesn’t need to commute: for any two elements and , is allowed.

This is notably the case for matrix multiplication, or on a Rubik’s cube (see example below).

In fact, we will see later that groups in which multiplication always commutes (also known as abelian groups)

cannot be used in Rubin’s theorem.

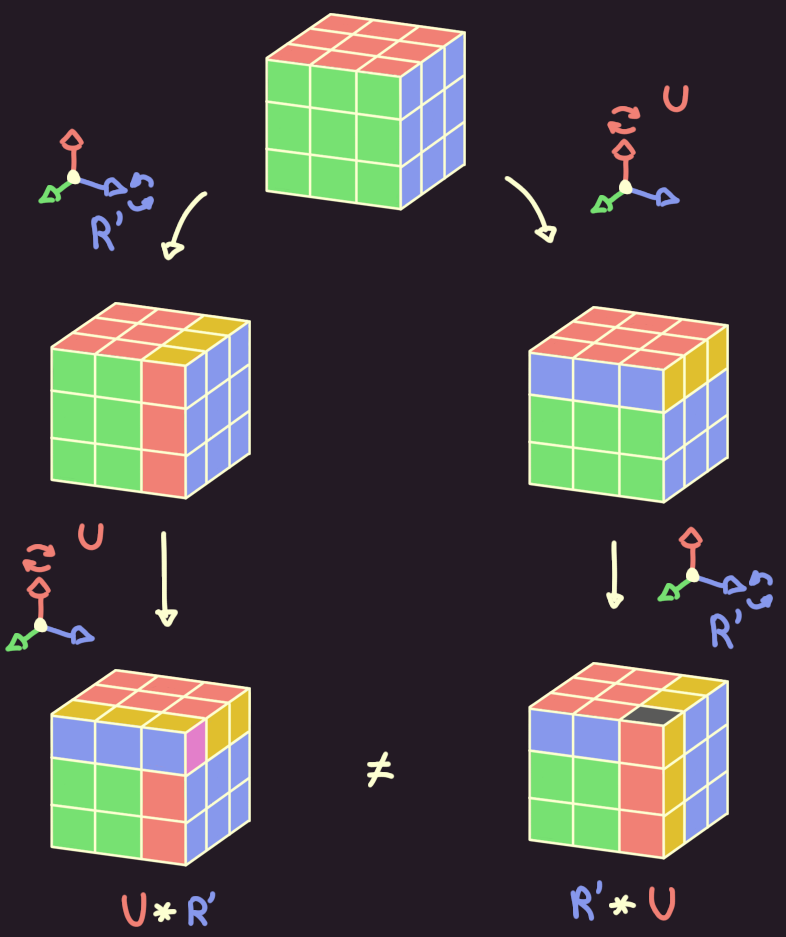

On a Rubik’s cube, when doing

On a Rubik’s cube, when doing U after R' (ie. U*R'), we get a different position than when doing R' after U (ie. R'*U).

Lastly a subgroup of is a subset of containing , and in which the multiplication and inverse operations are closed: and .

The subgroups of can be ordered with the relationship, and there are two subgroups found in every group:

- itself, which is the biggest subgroup of , and is therefore usually referred to as the top subgroup, or

- , which only contains ’s identity element . This is the smallest possible subgroup of , so it is also referred to as the bottom subgroup, or

Topological spaces

You might remember from calculus the concept of a continuous function: it is a function which takes as input a real number, and outputs another real number, such that you never have to “lift your pen” to draw the graph of this function.

However, this “pen”-based definition quickly falls short when we consider continuity for other kinds of functions:

- What does it mean for a function to be continuous on the rational numbers ? The “pen” always has to “jump” from one rational to the other, but that shouldn’t be a reason to discard the function as discontinuous.

- For functions that take as input two numbers instead of one, the “pen” would need to draw surfaces instead of lines, which is something that pens are usually incapable of doing.

- What about functions that operate on other functions? Translating a function (for instance, ) sounds like a continuous operation, but I doubt I would convince anyone if I said that I never lifted my “function pen” while doing so.

“Can you tell where I lifted my function pen?”

“Can you tell where I lifted my function pen?”

To remedy this, mathematicians came up with the concept of a topological space. Like groups, a topological space is made up of a few basic building blocks, which follow a set of rules:

- A set , called the universe.

- A collection of subsets of , referred to as or the open sets of .

- The empty set must be in : .

- itself must be in : .

- If and are both open, then their intersection must also be open: .

- If we have a collection of open sets (such that ), then the union of all of these sets must be open.

- A set is closed if is open.

In the case of the real numbers, contains all of the open intervals, and unions of open intervals.

and are both open sets, but and aren’t.

In fact, is closed, since is open.

Using this definition, a function is then said to be continuous if for every open set , the set of points that maps inside of must also be open: . For functions from to , this condition is equivalent to the pen-based definition.

The function is discontinuous, because for , isn’t open.

The function is discontinuous, because for , isn’t open.

Conditions on topological spaces

You might have noticed that the above definition for a topological space leaves a lot of freedom on the choice of ,

perhaps even too much freedom.

On the one hand, if (the trivial topology of ),

then the only open sets are the universe and the empty set, which is not very useful.

On the other hand, if (the discrete topology of ), then all the sets are open (and coincidentally closed), so every function from is continuous.

For this reason, we often impose some common, additional conditions that and must respect. Here are the ones that Rubin’s theorem requires:

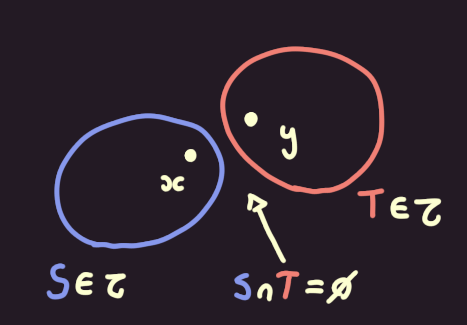

must be Hausdorff: for any two , you can find an open set around and an open set around , that do not intersect ().

For the reals, assuming that , the sets and satisfy this condition. A similar construction can be done for .

If is Hausdorff, then no matter how close and are, we can draw disjoint open sets around both of them.

If is Hausdorff, then no matter how close and are, we can draw disjoint open sets around both of them.

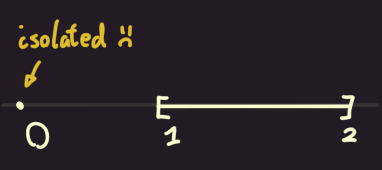

must not have any isolated points: for every , the set that only contains it, , must not be open. This condition essentially forbids us from choosing the discrete topology.

We saw before that for the reals, is never an open set (it is instead only a closed set), so has no isolated points.

In the topological space , the point is isolated.

In the topological space , the point is isolated.

must be locally compact: for every point , it should be possible to obtain a compact set in the neighborhood of ; that is, there is a set such that every sequence of points in must converge to a point in , and there exists a set such that and .

The reals are an example of a locally compact space, since every closed set is compact (for instance, any sequence in cannot converge to any number outside of that range). We thus simply have to take the interval to obtain a compact set in the neighborhood of some , and .

Interior and closure

There are two common operations in topology that allows one to obtain an open or a closed set from any arbitrary set :

- The interior of is the largest open subset of : and . It can be obtained by taking the union of all open subsets of .

- The closure of is the smallest closed superset of , it is the dual of the interior operation:

Note that the interior of may be empty if is too small, and the closure of can be itself if is too big.

Group actions

A group is said to acts on some set if, for every :

- For every , the result of the action of on (which we write ) is in :

- For every and , the action of corresponds to the compound action of and :

- The action of does not move :

An example of such a group action is the matrix-vector multiplication: when evaluating , we can first transform by and then transform it by , or we can compute once and then transform by .

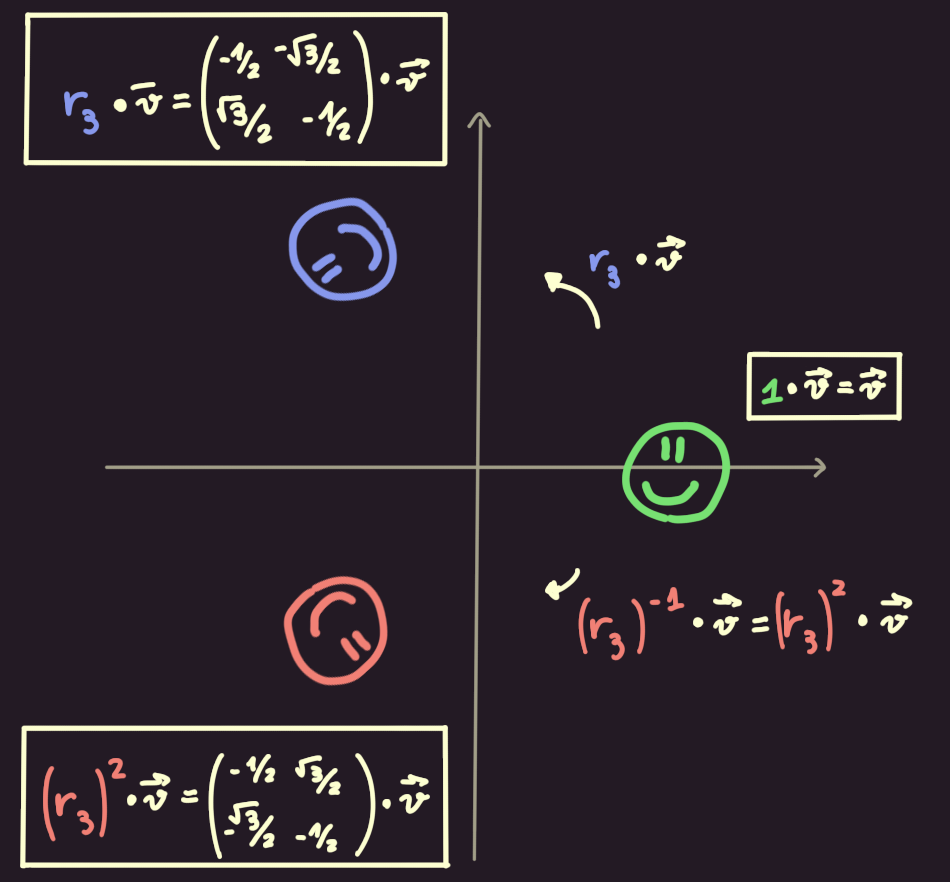

Another famous example of a group action is the action of the cyclic group by rotating points on a 2D plane:

The group action of corresponds to rotating by degrees.

The group action of corresponds to rotating by degrees.

Conditions on group actions

Just like with topological spaces, Rubin’s theorem requires that the group action respects a few additional rules, namely:

The action must be faithful:

for any , if and move points in the same fashion, then they are equal:

.

In other words, we can tell group elements apart simply by looking at how they move points in space.

The action must be continuous: for every , the function must be continuous.

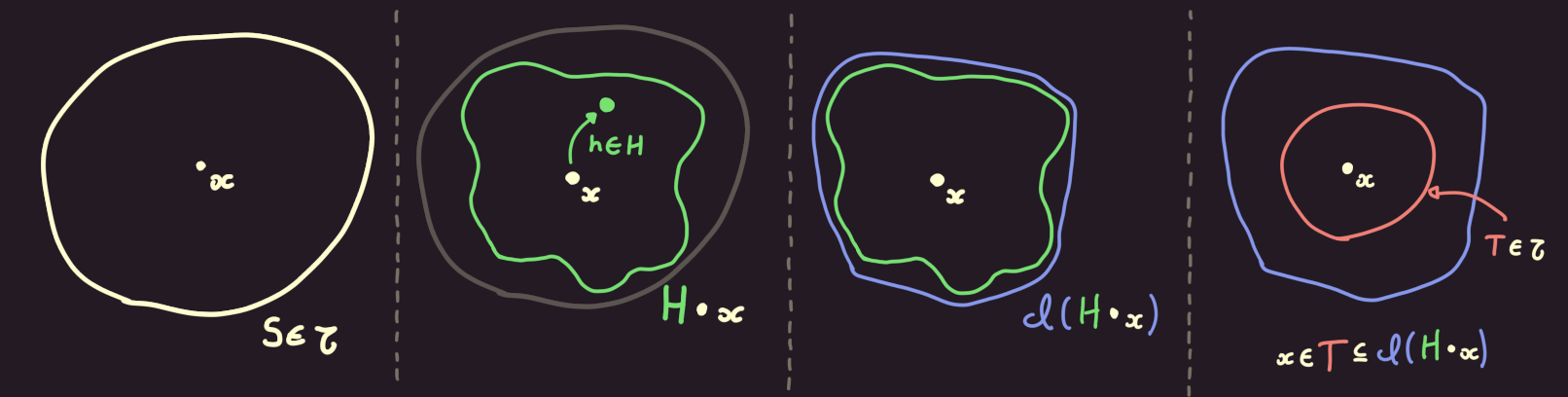

The action must be locally dense: this is a somewhat complex condition that is specific to Rubin’s theorem, but it can be summarized as follows:

- Pick an open set and some .

- Find all the elements that leave points outside of unmoved (); the set of all of these elements forms a subgroup of , which we will call .

- Construct , the set of all the points towards which gets moved by the elements of .

- Compute the closure of that set: .

- Then, there must exist an open set containing that is a subset of the previously-constructed set: and .

Essentially, this last condition requires that for arbitrarily small open sets , we must always find enough elements in to “fill in” enough of the space in around .

A visual representation of the condition that an action is locally dense.

A visual representation of the condition that an action is locally dense.

Homeomorphism

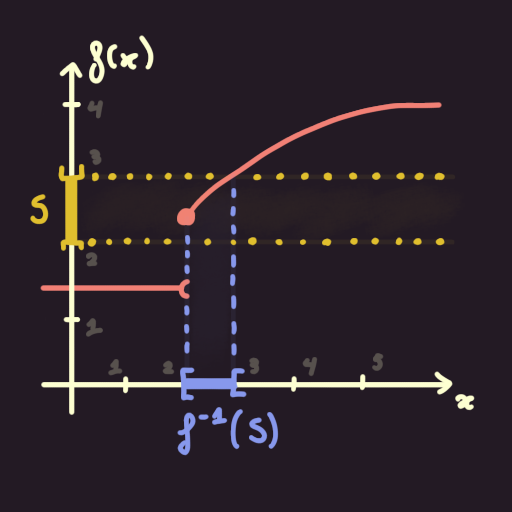

A homeomorphism is a function such that:

- is bijective: it maps one-to-one values in to values in

- is continuous

- is also continuous

Homeomorphisms are useful tools, since the open sets in can be obtained from the open sets in by mapping them through ,

and vice-versa ( and ).

As such, if one can construct a homeomorphism between two topological spaces,

then these two spaces will share a lot properties and be essentially topologically equivalent.

Rubin’s theorem

We can now finally state Rubin’s theorem!

An action of a group on a topological space is called a Rubin action if:

- is Hausdorff, has no isolated points and is locally compact (see Conditions on topological spaces above for a refresher).

- The action of on is faithful, continuous and locally dense (see Conditions on group actions).

Then, if we have two Rubin actions of on and of on , then there exists a homeomorphism that preserves the action of : .

The existence of this homeomorphism means that the two spaces and their respective group action by are essentially unique: we can losslessly go from one to the other.

Computer-assisted theorem proving

Computer theorem provers are programs that provide ways to express and verify mathematical theorems. The advantage of using such a theorem prover is that you reduce human error in the proof verification process to almost zero: as long as the theorem prover functions well, and your definitions are accurate, you can be guaranteed that your proof is 100% correct if the theorem prover accepts it.

The one downside when one wants to prove things using such a theorem prover, though,

is that one has to prove these mathematical statements to a computer.

No taking shortcuts, hand-waving details away or leaving lemmas as exercise to the reader;

everything needs to be proven thoroughly, from properties as simple as 1 + 1 = 2 to the

Fundamental Theorem of Algebra.

Theorem provers have been around for a few decades now, notably with Coq and Isabelle.

Lean is, in comparison, a relatively recent addition to the theorem prover landscape, as it was first released in 2013. Its main selling point is that it is easier to use (it provides a VSCode extension whose quality is on-par with rust-analyzer’s), and it powers the community-developped library, Mathlib, which aims to formalize as much of modern mathematics as possible.

While not being a central theorem, Laurent and I believe that Rubin’s theorem would be a neat addition to Mathlib, as it can be used as a stepping stone in a few already-existing theorems (J. Belk et al. have compiled a nice list of its applications in their article).

Our proof closely follows the proof by J. Belk et al, as it is the most concise proof of this theorem to date. However, as I started working on this project, all I had was a link to the paper and a 1000-lines long, unfinished proof, written for Lean 3, covering the first few lemmas of the paper. So, there was and still is a lot left to do to get to this project’s final goal.

Porting to Lean 4

The first official release of version 4 of Lean was only made a couple of months ago, but projects like Mathlib had been preparing themselves for the release and had already ported themselves over to the newer version. This meant that my first order of business was to port the existing proof over to Lean 4.